Readdressing CRM Service Invocation Failures: A Practitioner’s Perspective

In the trenches of enterprise software support, few issues are as persistent—and as maddening—as CRM service invocation failures. Over the past decade, I’ve watched countless teams scramble to patch together workarounds, only to see the same errors resurface weeks later under slightly different conditions. The root causes are rarely as straightforward as a misconfigured endpoint or a missing API key. More often, they stem from a tangle of architectural debt, inconsistent error handling, and organizational silos that treat integration points as afterthoughts rather than critical pathways.

Let me be clear: this isn’t just about Salesforce or Microsoft Dynamics. While those platforms dominate headlines, the problem cuts across any system where customer data flows between modules—whether it’s a homegrown CRM talking to an ERP, a marketing automation tool syncing with a billing engine, or even internal microservices exchanging lead information. The moment you introduce a network boundary between systems that must cooperate in real time, you invite failure modes that are subtle, intermittent, and devilishly hard to reproduce.

I remember one incident vividly. A mid-sized SaaS company had built a slick onboarding flow that pulled prospect data from their CRM into a provisioning service. Everything worked fine in staging. But once deployed, roughly 3% of sign-ups would stall indefinitely. Logs showed “connection reset by peer” errors, but only during peak hours. The DevOps team blamed the CRM vendor’s throttling policy. The CRM admin insisted their API limits weren’t being breached. Meanwhile, customer success was fielding angry calls from paying users whose accounts never activated.

After three days of war-room debugging, we discovered the culprit wasn’t in either system—it was in the TLS handshake timeout configuration on the load balancer sitting between them. Under heavy load, the handshake took just long enough to exceed the default 10-second window, causing silent drops that neither side logged properly. No single team owned that layer. It fell through the cracks because everyone assumed someone else was watching the pipes.

This story illustrates a broader truth: invocation failures are rarely technical alone. They’re symptoms of fragmented ownership and poor observability. Too many organizations treat integrations like plumbing—out of sight, out of mind—until something backs up. Then they throw engineers at the symptom without addressing the systemic rot underneath.

So how do we fix this? Not with more monitoring dashboards or fancier retry logic (though those help). We need to reframe how we design, deploy, and maintain these connections from day one.

First, enforce contract-first integration design. Before a single line of code is written, both sides must agree on request/response schemas, error codes, rate limits, and SLAs. This isn’t bureaucracy—it’s prevention. I’ve seen teams skip this step to “move fast,” only to spend months reconciling mismatched date formats or ambiguous HTTP status interpretations. A shared OpenAPI spec, versioned and stored in source control alongside application code, becomes the single source of truth. Changes require pull requests and cross-team reviews. Suddenly, breaking changes become visible before they break production.
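As a rough illustration of what checking a payload against an agreed contract can look like, here is a minimal sketch. The `LEAD_CONTRACT` fields and the `validate_lead` helper are hypothetical names invented for this example; in practice the rules would more likely be generated from the shared OpenAPI spec than written by hand.

```python
from datetime import datetime

# Hypothetical contract for a "lead" payload; the field names are illustrative.
LEAD_CONTRACT = {
    "lead_id": str,
    "email": str,
    "created_at": str,  # assumed agreement: ISO 8601 timestamp
}


def validate_lead(payload: dict) -> list:
    """Return a list of contract violations (empty when the payload conforms)."""
    errors = []
    for field, expected_type in LEAD_CONTRACT.items():
        if field not in payload:
            errors.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected_type):
            errors.append(f"{field}: expected {expected_type.__name__}")

    # Catch the classic mismatch: a date that is a string but in the wrong format.
    created = payload.get("created_at")
    if isinstance(created, str):
        try:
            datetime.fromisoformat(created)
        except ValueError:
            errors.append("created_at: not ISO 8601")
    return errors
```

Running a check like this in CI on both the producer and consumer side is one way to surface a mismatched date format at review time rather than in production.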

Second, implement defensive invocation patterns: not just retries, but circuit breakers, bulkheads, and fallback strategies. A naive retry loop can amplify a transient failure into a cascading outage. If your CRM service is down, hammering it with repeated calls won’t bring it back faster; it will just exhaust connection pools and delay recovery. Instead, wrap every external call in a circuit breaker that trips after a threshold of failures and then stays open, rejecting calls immediately, for a cooldown period before letting a trial request through. During that window, either queue the request for later processing or serve a degraded but functional experience. For example, if fetching a customer’s recent support tickets fails, don’t block the entire profile view; show cached data with a subtle “last updated 2 hours ago” label.
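The circuit breaker and cached fallback described here can be sketched roughly as follows. This is a single-threaded illustration, not a production implementation; `CircuitBreaker`, `CircuitOpenError`, and `fetch_with_fallback` are hypothetical names, and the threshold and cooldown defaults are arbitrary.

```python
import time


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the breaker is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, cooldown_seconds=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.cooldown_seconds = cooldown_seconds
        self._clock = clock          # injectable so tests can control time
        self._failures = 0           # consecutive failures seen so far
        self._opened_at = None       # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.cooldown_seconds:
                raise CircuitOpenError("circuit open; skipping call")
            # Cooldown elapsed: fall through and allow one trial call.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            # A failed trial call re-opens the circuit immediately.
            if self._opened_at is not None or self._failures >= self.failure_threshold:
                self._opened_at = self._clock()
            raise
        # Success closes the circuit and resets the failure count.
        self._failures = 0
        self._opened_at = None
        return result


def fetch_with_fallback(breaker, fetch, cached):
    """Return (data, is_stale): fresh data if the call succeeds, else the cached copy."""
    try:
        return breaker.call(fetch), False
    except Exception:
        return cached, True
```

The `is_stale` flag is what would drive a "last updated" label in the UI; a real service would also need thread safety and per-dependency breaker instances.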

Third, standardize error telemetry across all integration points. Too often, logs from different services use incompatible formats or omit critical context like correlation IDs. When an invocation fails, you should be able to trace the entire journey—from the user action that triggered it, through every hop, to the final error—with a single ID. This requires upfront investment in structured logging and distributed tracing, but the payoff is immense. At one client, we reduced mean-time-to-resolution for integration issues by 70% simply by ensuring every log entry included trace_id, span_id, service_name, and upstream/downstream endpoints.
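One way to make those fields appear on every log entry is a JSON formatter plus a small helper, sketched below with Python's standard `logging` module. `JsonFormatter`, `new_span_id`, and `log_failure` are hypothetical names for this example, and the span ID here is just a random hex string; a real tracing library would supply its own IDs.

```python
import json
import logging
import uuid

# Context fields named in the text; missing ones are logged as null.
CONTEXT_FIELDS = ("trace_id", "span_id", "service_name", "upstream", "downstream")


class JsonFormatter(logging.Formatter):
    """Render each record as one JSON object with the shared context fields."""

    def format(self, record):
        entry = {"level": record.levelname, "message": record.getMessage()}
        for field in CONTEXT_FIELDS:
            entry[field] = getattr(record, field, None)
        return json.dumps(entry)


def new_span_id():
    # Assumption: a 16-character hex string is enough to identify a span here.
    return uuid.uuid4().hex[:16]


def log_failure(logger, message, trace_id, service_name, upstream, downstream):
    """Log an invocation failure with the correlation context attached."""
    logger.error(message, extra={
        "trace_id": trace_id,
        "span_id": new_span_id(),
        "service_name": service_name,
        "upstream": upstream,
        "downstream": downstream,
    })
```

Attaching `JsonFormatter` to a handler is enough to make every service emit the same shape, which is what lets a single `trace_id` search reconstruct the whole journey.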

Fourth, treat integration tests as first-class citizens. Unit tests verify logic, end-to-end tests verify workflows, and integration tests verify contracts. Run them continuously against real (or realistically mocked) external services. Don’t just check for 200 OK; validate payload structure, response timing, and edge cases like empty responses or unexpected fields. Crucially, run them in environments that mirror production networking conditions, because latency, packet loss, and DNS behavior in a cloud sandbox rarely match what live traffic sees.
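A contract-level check that goes beyond the status code might look something like the sketch below. The ticket fields, the two-second budget, and the helper names `timed_call` and `check_tickets_response` are all assumptions made for illustration. The sketch also assumes the contract tolerates unknown extra fields, which is a choice the two teams would need to agree on.

```python
import time

# Hypothetical contract for one support-ticket item in the response list.
REQUIRED_FIELDS = {"ticket_id": str, "status": str}


def timed_call(fetch):
    """Call fetch() and return (result, elapsed_seconds)."""
    start = time.monotonic()
    result = fetch()
    return result, time.monotonic() - start


def check_tickets_response(status_code, body, elapsed_seconds, max_seconds=2.0):
    """Return a list of problems; an empty list means the response honors the contract."""
    problems = []
    if status_code != 200:
        problems.append(f"unexpected status {status_code}")
    if elapsed_seconds > max_seconds:
        problems.append(f"too slow: {elapsed_seconds:.2f}s")
    if not isinstance(body, list):
        problems.append("body is not a list")
        return problems
    # An empty list is valid; extra fields on an item are ignored.
    for index, item in enumerate(body):
        if not isinstance(item, dict):
            problems.append(f"item {index}: not an object")
            continue
        for field, expected in REQUIRED_FIELDS.items():
            if not isinstance(item.get(field), expected):
                problems.append(f"item {index}: bad or missing {field}")
    return problems
```

In a test suite, `timed_call` would wrap the real or mocked HTTP request, and the assertion would simply be that `check_tickets_response(...)` returns an empty list.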

Fifth—and perhaps most overlooked—establish clear ownership boundaries. Who gets paged when the CRM sync fails? Is it the CRM team, the consuming application team, or the platform infrastructure group? Ambiguity here guarantees finger-pointing and delayed response. Define an Integration Owner for each major data flow: someone accountable for uptime, performance, and incident coordination. This role doesn’t replace existing teams but acts as a liaison, ensuring that monitoring, alerting, and runbooks exist and are tested regularly.

Now, let’s talk about what not to do. Avoid “magic” middleware that promises to “handle all integration problems.” These tools often obscure more than they solve, adding layers of abstraction that make debugging harder. Similarly, resist the urge to build custom retry-or-queue mechanisms for every new integration. Standardize on a small set of proven patterns (e.g., exponential backoff with jitter, dead-letter queues with manual review) and enforce them via shared libraries or service templates.
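For reference, exponential backoff with jitter fits in a few lines, which is part of why it is worth standardizing in a shared library. The sketch below uses the "full jitter" variant, where each delay is drawn uniformly between zero and the capped exponential value. The function names, defaults, and the choice of retryable exceptions are assumptions for this example.

```python
import random
import time


def backoff_delays(max_attempts, base=0.5, cap=30.0, rng=random.random):
    """Yield full-jitter delays: rng() * min(cap, base * 2**attempt)."""
    for attempt in range(max_attempts):
        yield rng() * min(cap, base * (2 ** attempt))


def call_with_retry(func, max_attempts=5, base=0.5, cap=30.0,
                    sleep=time.sleep, rng=random.random,
                    retry_on=(ConnectionError, TimeoutError)):
    """Call func(), retrying transient errors with jittered exponential backoff."""
    last_error = None
    for attempt in range(max_attempts):
        try:
            return func()
        except retry_on as exc:
            last_error = exc
            if attempt == max_attempts - 1:
                break  # out of attempts; do not sleep before giving up
            sleep(rng() * min(cap, base * (2 ** attempt)))
    # All attempts failed: surface the last error so the caller can
    # route the request to a dead-letter queue or a fallback.
    raise last_error
```

The jitter matters because clients that fail together would otherwise retry together, producing synchronized bursts against a service that is already struggling.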

Another trap is over-reliance on synchronous calls for non-critical operations. Does your checkout flow really need to wait for the CRM to acknowledge a new lead before showing the “thank you” page? Probably not. Decouple these actions using message queues or event streams. Let the CRM ingestion happen asynchronously. You gain resilience at the cost of eventual consistency—which, in most business contexts, is a perfectly acceptable trade-off.
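The decoupling can be illustrated with an in-process queue standing in for a real message broker. In the sketch below, `handle_checkout`, `drain`, and the event fields are hypothetical; a production system would use a durable queue or event stream and a long-running consumer rather than a one-shot `drain` call.

```python
import queue


def handle_checkout(order, enqueue):
    """Record the lead as an event and return immediately, without calling the CRM."""
    enqueue({"email": order["email"], "order_id": order["order_id"]})
    return "thank-you"


def drain(events, send_to_crm, dead_letters, max_attempts=3):
    """Deliver queued events to the CRM; park repeated failures for manual review."""
    while True:
        try:
            event = events.get_nowait()
        except queue.Empty:
            return
        for _ in range(max_attempts):
            try:
                send_to_crm(event)
                break  # delivered; move on to the next event
            except Exception:
                continue  # try again, up to max_attempts
        else:
            # Every attempt failed: keep the event instead of dropping it.
            dead_letters.append(event)
```

Here the checkout path only depends on the enqueue succeeding, so a CRM outage delays lead ingestion but never blocks the "thank you" page.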

Finally, foster a culture where integration health is everyone’s concern, not just “someone else’s problem.” Include integration reliability metrics in team OKRs. Review failure patterns in post-mortems without blame. Share war stories in engineering all-hands meetings. When developers understand that a flaky CRM call can directly impact customer churn, they’re more likely to invest in robustness upfront.

I’ll close with a hard-won lesson: perfection is the enemy of resilience. You will never eliminate all invocation failures. Networks partition. Services degrade. Vendors change APIs without notice. The goal isn’t zero failures—it’s graceful degradation and rapid recovery. Build systems that bend instead of break, and teams that respond instead of deflect.

In my experience, the organizations that master this aren’t the ones with the fanciest tech stacks. They’re the ones that treat integration points with the same care as their core product features—because, increasingly, those integrations are the product. Customers don’t care which microservice failed; they care that their order didn’t go through or their support ticket vanished. By readdressing CRM service invocation failures not as isolated bugs but as systemic challenges, we move closer to systems that earn trust, not just function.

And that, ultimately, is what good engineering is about—not avoiding failure, but designing so that when it happens (and it will), the impact is contained, understood, and quickly reversed. The rest is just plumbing.

Relevant information:

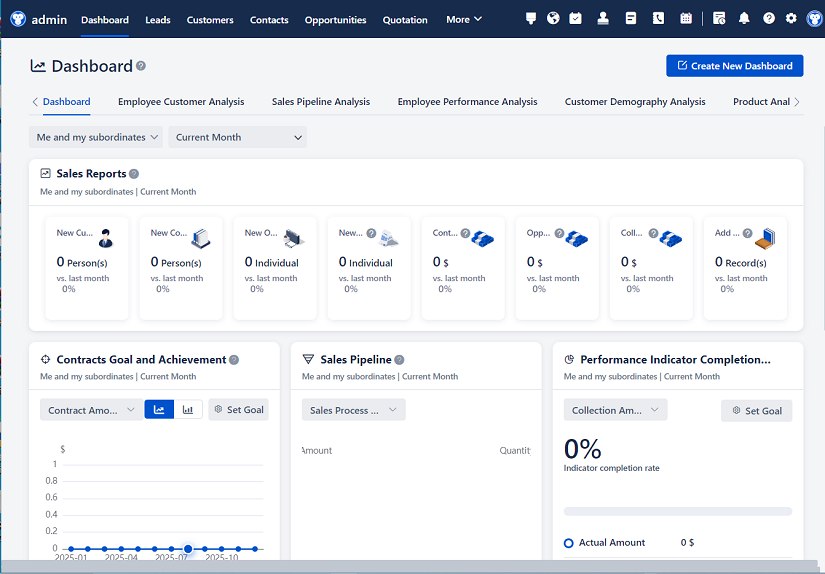

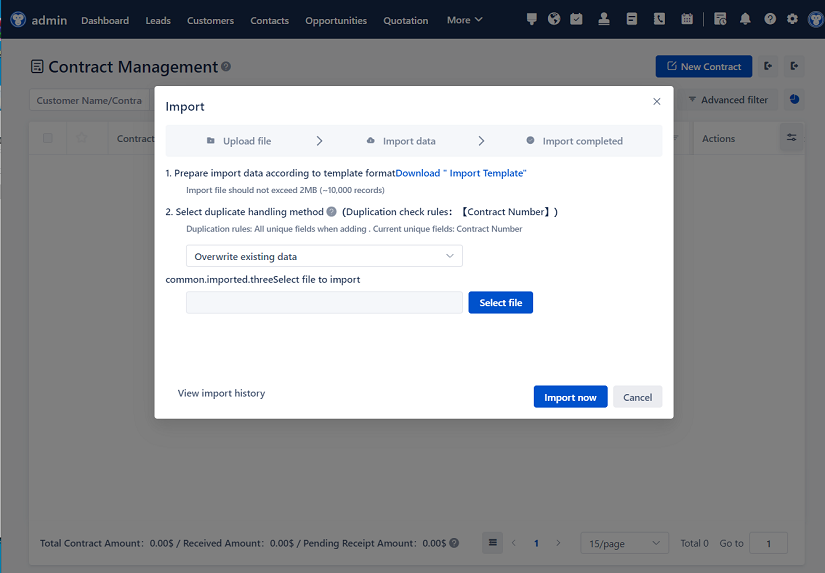
