Guidelines for Writing CRM Test Reports

Customer Relationship Management (CRM) systems are the backbone of modern sales, marketing, and customer service operations. They store critical data, automate workflows, and provide insights that drive business decisions. Given their importance, ensuring these systems function correctly through rigorous testing is non-negotiable. However, testing alone isn’t enough—what truly matters is how those test results are documented and communicated. A well-crafted CRM test report doesn’t just list pass/fail outcomes; it tells a story about system reliability, user experience, and potential risks. Below are practical, field-tested guidelines for writing CRM test reports that are clear, actionable, and genuinely useful to stakeholders.

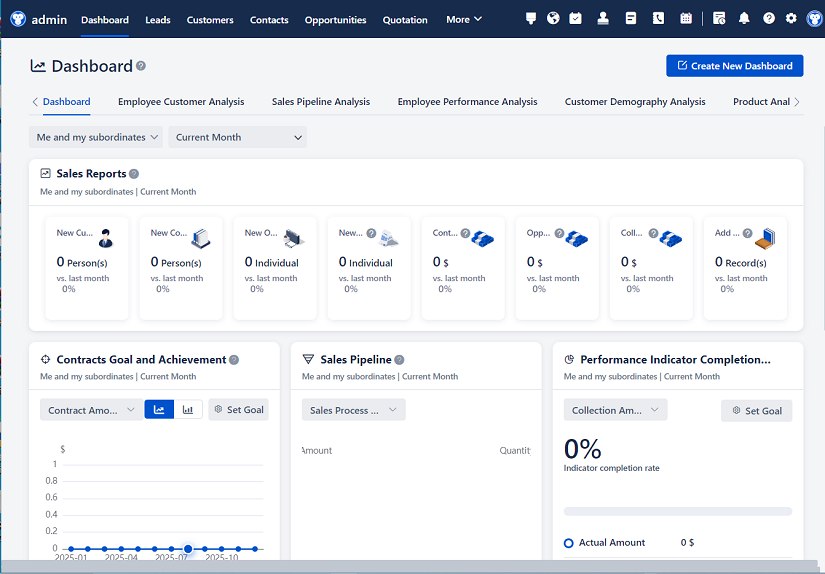

1. Know Your Audience

Before you type a single word, ask yourself: Who will read this report? Is it a technical QA lead, a product manager with limited coding knowledge, or an executive who needs high-level risk assessment? Tailoring your language and detail level accordingly is crucial. For developers, include error logs, API response codes, or database query snippets. For business users, focus on impact: “The ‘Send Email’ button fails during bulk campaign execution, delaying outreach by up to two hours.” Avoid jargon unless your audience lives in it. Clarity trumps cleverness every time.

2. Structure Matters—Use a Consistent Template

A chaotic report breeds confusion. Adopt a standardized structure across all CRM test cycles. Here’s a proven outline:

- Executive Summary: One paragraph highlighting overall system health, critical issues, and go/no-go recommendation.

- Test Scope: List modules tested (e.g., Lead Management, Case Tracking, Reporting Dashboard) and explicitly state what was not covered.

- Environment Details: CRM version, browser/OS combinations, integration endpoints (e.g., “Connected to Salesforce via REST API v58.0”).

- Test Approach: Briefly describe methodology—manual vs. automated, test data sources, user roles simulated.

- Defect Summary: Group findings by severity (Critical, High, Medium, Low) with counts and trend comparisons to previous cycles.

- Detailed Findings: Each defect gets its own section with steps to reproduce, expected vs. actual results, screenshots, and environment specifics.

- Recommendations & Next Steps: Actionable fixes, workarounds, or escalation paths.

- Appendices: Raw logs, test scripts, or traceability matrices if needed.

Consistency builds trust. When stakeholders know where to find information, they’re more likely to act on it.
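The Defect Summary item in the outline above (counts per severity plus a comparison with the previous cycle) is simple to produce by script. Here is a minimal Python sketch; the function name `summarize_by_severity` and the two sample cycles are made up for illustration and do not come from any particular test tool.

```python
from collections import Counter

# Severity levels in the order the report should list them.
SEVERITIES = ["Critical", "High", "Medium", "Low"]


def summarize_by_severity(current, previous):
    """Return (severity, count, change vs. previous cycle) rows in report order."""
    now = Counter(current)
    before = Counter(previous)
    return [(sev, now[sev], now[sev] - before[sev]) for sev in SEVERITIES]


# Hypothetical severities of the defects logged in two consecutive test cycles.
current_cycle = ["Critical", "High", "High", "Medium", "Low", "Low"]
previous_cycle = ["Critical", "Critical", "High", "Medium", "Medium", "Medium"]

for severity, count, change in summarize_by_severity(current_cycle, previous_cycle):
    print(f"{severity:<8} {count:>3} ({change:+d} vs. previous cycle)")
```

Keeping the severity order fixed in one constant means every cycle's summary lines up with the last one, which is the consistency this section argues for.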

3. Be Specific—Vagueness Kills Credibility

Never write: “The system is slow.” Instead: “Saving a new contact record takes 8.2 seconds on average (tested 10 times), exceeding the 3-second SLA. Observed in Chrome v124 on Windows 11 with 50 concurrent users.” Precision demonstrates rigor. Include:

- Exact CRM module names (“Opportunity Pipeline View,” not “sales screen”)

- User roles involved (“Marketing Coordinator role couldn’t export lead lists”)

- Data examples (“Fails when Company Name contains ‘&’ symbol”)

- Timestamps for time-sensitive issues (“Email sync fails between 2–3 AM UTC”)

If you can’t reproduce it consistently, say so—but document your attempts. “Intermittent failure: 3/20 runs failed during lead import. No pattern detected in logs.”
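If you already record raw timings and run outcomes, the precise sentences above can be generated rather than typed by hand. The following Python sketch assumes hypothetical sample timings and helper names (`describe_timing`, `describe_failure_rate`); only the 3-second SLA and the 3/20 failure count are taken from the examples in this section.

```python
from statistics import mean

SLA_SECONDS = 3.0  # threshold from the example sentence in this section


def describe_timing(samples, sla_seconds, action):
    """Turn raw timings (in seconds) into one specific, reportable sentence."""
    avg = mean(samples)
    verdict = "exceeding" if avg > sla_seconds else "within"
    return (
        f"{action} takes {avg:.1f} seconds on average "
        f"(tested {len(samples)} times), {verdict} the {sla_seconds:g}-second SLA."
    )


def describe_failure_rate(failures, runs, action):
    """Report an intermittent failure as a ratio and a percentage."""
    return (
        f"Intermittent failure: {failures}/{runs} runs failed "
        f"during {action} ({failures / runs:.0%})."
    )


# Hypothetical timings for ten saves of a new contact record.
save_times = [7.9, 8.4, 8.1, 8.6, 7.8, 8.3, 8.0, 8.5, 8.2, 8.2]

print(describe_timing(save_times, SLA_SECONDS, "Saving a new contact record"))
print(describe_failure_rate(3, 20, "lead import"))
```

A plain average is used here because that is what the example sentence reports; if your SLA is defined on a percentile, report that figure instead.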

4. Contextualize Defects with Business Impact

Technical bugs only matter if they hurt the business. Always link defects to real-world consequences:

- Critical: “Admins cannot reset passwords → 200+ locked-out users during peak sales hours.”

- High: “Discount fields miscalculate totals → potential revenue leakage in quotes.”

- Medium: “‘Notes’ field truncates after 255 characters → loss of customer feedback details.”

- Low: “Help icon misaligned on mobile → minor UI inconsistency.”

This prioritization helps non-technical stakeholders allocate resources wisely. A bug that breaks compliance (e.g., GDPR data deletion failures) should always be Critical—even if it’s “just” a backend script.

5. Visuals Are Non-Negotiable

A wall of text loses readers fast. Embed:

- Screenshots: Annotated with red circles/arrows pointing to errors. Blur sensitive data!

- Tables: For defect summaries (columns: ID, Module, Severity, Status, Owner).

- Graphs: Trend charts showing defect density per module over time.

- Flow diagrams: For complex workflow failures (e.g., “Lead-to-Opportunity conversion path”).

Tools like Jira, TestRail, or even Excel can generate the tables and charts quickly. Remember: A picture isn’t just worth a thousand words; it’s often the only thing busy managers will look at.
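If your tracker cannot export a defect table directly, a few lines of script will do. This Python sketch renders the five columns suggested above as an aligned, pipe-delimited table; `render_table` is a hypothetical helper, and the sample rows (including their Module and Status values) are illustrative only.

```python
# Column order suggested in this section.
COLUMNS = ["ID", "Module", "Severity", "Status", "Owner"]


def render_table(rows):
    """Render a list of dict rows as a pipe-delimited table with aligned columns."""
    widths = [
        max([len(col)] + [len(str(row[col])) for row in rows]) for col in COLUMNS
    ]

    def line(cells):
        padded = (str(cell).ljust(width) for cell, width in zip(cells, widths))
        return "| " + " | ".join(padded) + " |"

    separator = "|" + "|".join("-" * (width + 2) for width in widths) + "|"
    body = [line([row[col] for col in COLUMNS]) for row in rows]
    return "\n".join([line(COLUMNS), separator] + body)


# Illustrative rows; the values are made up for this sketch.
defects = [
    {"ID": "CRM-4412", "Module": "Contact Search", "Severity": "High",
     "Status": "Open", "Owner": "Frontend Team"},
    {"ID": "CRM-7721", "Module": "Case Management", "Severity": "High",
     "Status": "In Progress", "Owner": "Backend Services Team"},
]

print(render_table(defects))
```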

6. Separate Facts from Opinions

Your report should be a factual record, not a critique session. Avoid phrases like:

- “The developers clearly rushed this feature.”

- “This design is terrible.”

Instead, stick to observable truths:

- “Feature X lacks validation for email format, allowing invalid entries like ‘user@domain’.”

- “Navigation requires 5 clicks to reach the dashboard, exceeding the project’s usability target of 3 clicks.”

If you must suggest improvements, frame them as recommendations: “Consider adding real-time validation to prevent data entry errors.”

7. Track Test Coverage Transparently

Stakeholders need to know what wasn’t tested as much as what was. Include:

- Coverage metrics: “95% of core lead management scenarios executed; 100% of payment processing paths covered.”

- Exclusions: “Mobile app testing deferred to Phase 2 due to device availability.”

- Risks of gaps: “Untested integrations with Mailchimp may cause sync failures post-launch.”

This honesty prevents nasty surprises later. If leadership approved skipping certain tests, your report becomes proof—not blame.
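Coverage figures like the ones above are more convincing when the raw counts sit next to the percentage. A minimal Python sketch, assuming you track executed and planned scenario counts per area; the function name and the numbers are hypothetical.

```python
def coverage_line(area, executed, planned):
    """Format one coverage metric, showing the raw counts behind the percentage."""
    if planned <= 0:
        raise ValueError("planned must be a positive number of scenarios")
    percent = 100 * executed / planned
    return f"{percent:.0f}% of {area} scenarios executed ({executed}/{planned})"


# Hypothetical counts for two test areas.
print(coverage_line("core lead management", 57, 60))
print(coverage_line("payment processing", 24, 24))
```

Printing the counts alongside the percentage lets a reader see at a glance whether "95%" means 3 skipped scenarios or 300.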

8. Use Active Voice and Strong Verbs

Passive voice drains energy: “It was observed that errors occurred.”

Active voice commands attention: “The system threw a 500 error when submitting forms.”

Other tips:

- Replace “utilize” with “use.”

- Ditch “in order to” for “to.”

- Kill filler words (“very,” “really,” “basically”).

Example rewrite:

Weak: “There might be some issues with the functionality.”

Strong: “The ‘Merge Accounts’ feature fails 100% of the time when the primary account has open cases.”

9. Include Reproduction Steps That Anyone Can Follow

Assume your reader knows nothing about the CRM. Write steps like you’re teaching a new intern:

- Log in as user “test_sales_mgr@company.com” (credentials in Appendix A).

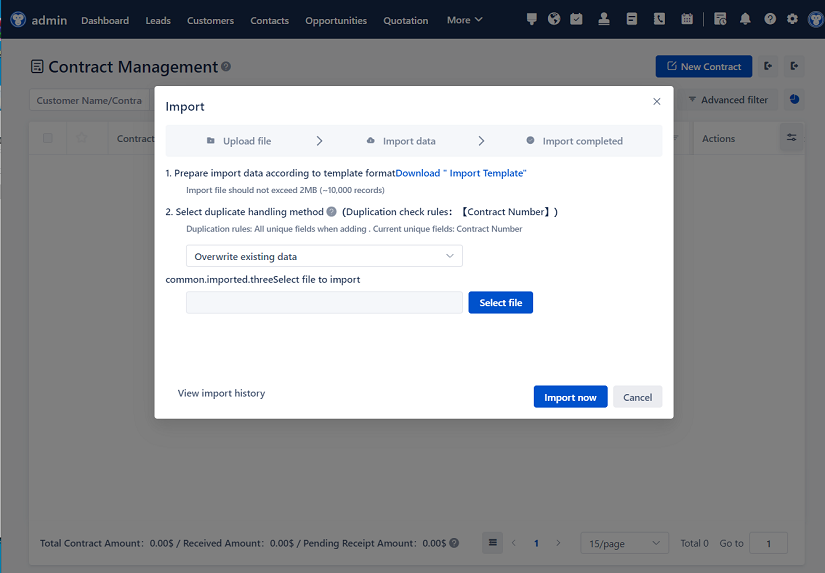

- Navigate to Leads > Import Wizard.

- Upload “leads_invalid_email.csv” (attached).

- Click “Validate Data.”

- Observe: System accepts file but shows no error for row 3 (email: “john@@gmail.com”).

Numbered steps > paragraphs. Mention preconditions (“Ensure 10+ leads exist in ‘New’ status”) and post-steps (“Check ‘Import Errors’ log”).

10. Close the Loop with Clear Ownership

Every defect should have an owner—not just “Dev Team.” Assign to specific roles or individuals if possible:

- “Frontend Team: Fix UI freeze on contact search (Ticket CRM-4412).”

- “Integration Squad: Resolve duplicate lead creation from Webform API (Ticket INT-887).”

Include target resolution dates if agreed upon. Nothing stalls fixes faster than ambiguity about responsibility.

11. Proofread Ruthlessly

Typos and grammatical errors undermine credibility. Read your report aloud—it catches awkward phrasing. Better yet, have a colleague skim it. Ask: “Can you understand the top 3 risks in 30 seconds?” If not, simplify.

12. Archive and Version Control

Name files logically: “CRM_TestReport_Release2.1_20240615_v2.docx.” Store in shared drives with access controls. Never rely on email attachments alone. Future you (or auditors) will thank you when tracing why a bug reappeared six months later.
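A naming convention only holds if nobody has to remember it. One option is to generate the name; this Python sketch reproduces the pattern from the example above, with `report_filename` and its parameters being hypothetical names chosen for illustration.

```python
from datetime import date


def report_filename(release, report_date, version, extension="docx"):
    """Build a file name following the pattern CRM_TestReport_Release<x>_<YYYYMMDD>_v<n>."""
    return (
        f"CRM_TestReport_Release{release}_"
        f"{report_date:%Y%m%d}_v{version}.{extension}"
    )


print(report_filename("2.1", date(2024, 6, 15), 2))
```

Putting the date in year-month-day order means an alphabetical sort of the folder is also a chronological one.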

Real-World Example Snippet

Defect ID: CRM-7721

Module: Case Management

Severity: High

Environment: Salesforce Production Sandbox, Chrome v125

Steps to Reproduce:

1. Create a new Case with Status = “Escalated.”

2. Assign to queue “Tier2_Support.”

3. Wait 15 minutes (SLA timer starts).

4. Refresh page.

Expected Result: SLA countdown timer visible in right sidebar.

Actual Result: Timer missing; “SLA Breach” alert appears immediately.

Business Impact: Support teams miss escalation deadlines, risking client penalties per contract §4.2.

Screenshot: [Attached: case_view_missing_timer.png]

Owner: Backend Services Team (Ticket SVC-3390)

Target Fix Date: 2024-06-30
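If your team writes many defect entries like the one above, a small data structure can enforce the template and flag blanks before the report goes out. The Python sketch below is one possible shape, not a standard format: the `DefectRecord` class, its field names, and the choice of which fields to include are assumptions made for illustration.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class DefectRecord:
    """One defect entry; field names are illustrative, not a standard schema."""
    defect_id: str
    module: str
    severity: str
    environment: str
    steps: List[str]
    expected: str
    actual: str
    business_impact: str
    owner: str

    def missing_fields(self):
        """Names of fields left empty, so gaps are caught before publishing."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def render(self):
        """Format the record in the layout used by the example above."""
        lines = [
            f"Defect ID: {self.defect_id}",
            f"Module: {self.module}",
            f"Severity: {self.severity}",
            f"Environment: {self.environment}",
            "Steps to Reproduce:",
        ]
        lines += [f"{n}. {step}" for n, step in enumerate(self.steps, start=1)]
        lines += [
            f"Expected Result: {self.expected}",
            f"Actual Result: {self.actual}",
            f"Business Impact: {self.business_impact}",
            f"Owner: {self.owner}",
        ]
        return "\n".join(lines)


record = DefectRecord(
    defect_id="CRM-7721",
    module="Case Management",
    severity="High",
    environment="Chrome v125",
    steps=["Create a new Case with Status = Escalated.", "Refresh page."],
    expected="SLA countdown timer visible in right sidebar.",
    actual="Timer missing.",
    business_impact="Support teams miss escalation deadlines.",
    owner="",  # deliberately left blank to show the check
)

print(record.missing_fields())
print(record.render())
```

The empty `owner` in the sample is deliberate: `missing_fields()` reports it, which ties back to the ownership rule in guideline 10.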

Final Thought: Your Report Is a Tool, Not a Tombstone

Too many testers treat reports as bureaucratic checkboxes. But in reality, a great CRM test report is a catalyst—it drives decisions, prevents outages, and builds confidence in the product. It’s the bridge between QA’s meticulous work and the business outcomes leadership cares about. Spend the extra 20 minutes polishing it. The clarity you provide today could save your company thousands in lost deals or reputational damage tomorrow.

Remember: Testing finds the cracks. Reporting ensures they get fixed.