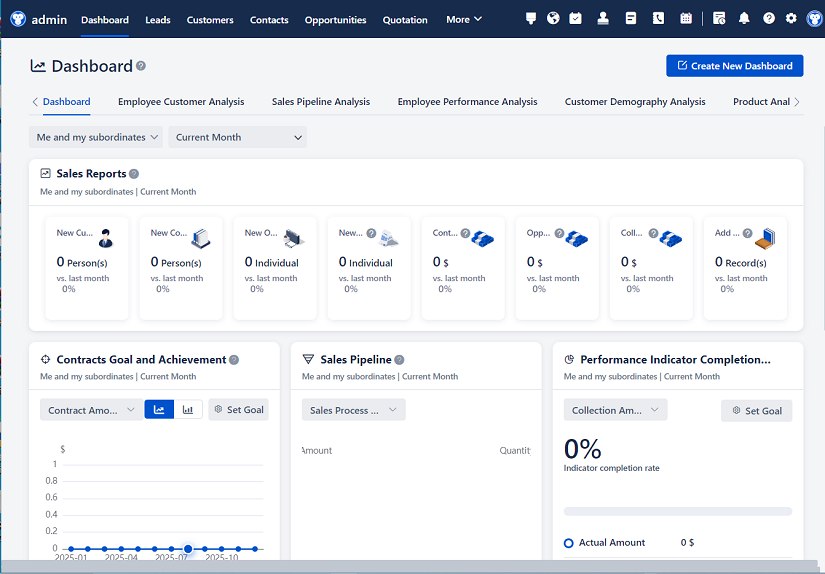

Alright, so here’s the thing — we’ve been talking about setting up a CRM test environment for weeks now, and honestly, it’s finally time to just roll up our sleeves and get it done. I mean, we can’t keep testing new features or updates on the live system; that’s just asking for trouble, right? One wrong move and boom — customer data gets messed up, workflows break, and suddenly everyone’s panicking. So yeah, having a solid test environment isn’t just nice to have — it’s absolutely essential.

Let me walk you through how we actually went about doing this. First off, we had to figure out what kind of CRM we were working with. In our case, it’s Salesforce, but honestly, the general steps would be pretty similar no matter which platform you’re using — could be HubSpot, Zoho, Microsoft Dynamics, whatever. The key is understanding your system’s architecture and what kind of data and configurations you need to replicate.

So step one was cloning the production environment. Now, I know some people try to build a test environment from scratch, but trust me, that’s way more work than it’s worth. Instead, we used Salesforce’s sandbox feature, specifically a Full Copy sandbox. It takes longer to create, sure, but it gives us a replica of production: all the data, custom fields, automation rules, user roles, the whole nine yards. That way, when we test something, we’re not guessing whether it’ll work in real life. If it works against a mirror image of production, we can be far more confident it will work in production too.

Setting up the sandbox wasn’t too bad, actually. We logged into our Salesforce org, went to Setup, searched for “Sandboxes,” and clicked “New Sandbox.” Then we picked “Full” as the type, gave it a name (we called ours “CRM-Test-Full-Copy”), and hit go. It took about six hours to complete, which sounds like a lot, but hey, it ran overnight, so no big deal. Once it was ready, we got an email notification, and we were good to start poking around.

Now, here’s where things get interesting. Just because the environment is copied doesn’t mean it’s ready to use. There are always a few cleanup steps. For example, we had to reset all the user passwords — wouldn’t want testers accidentally logging in as real employees, right? And we disabled any scheduled jobs or integrations that might send real emails or update external systems. We don’t need our marketing team getting duplicate campaign alerts during testing.

We also made sure to anonymize sensitive data. Even though it’s a test environment, we still have to follow data privacy rules. So we ran a script that replaced real customer names, emails, and phone numbers with fake ones. Stuff like “John Doe” and “johndoe@testmail.com” — nothing real. Keeps compliance happy and reduces risk.
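
To give a feel for what a masking script like that can look like, here’s a minimal sketch in Python. It is not the script we ran; the field names (`name`, `email`, `phone`), the salt, and the `example.invalid` domain are all assumptions for illustration. It derives a stable token from the original email so the same contact always gets the same fake identity, which keeps related records consistent after masking.

```python
import hashlib


def pseudonym(value: str, salt: str = "sandbox-salt") -> str:
    """Return a short, stable token derived from the original value.

    The salt is a hypothetical placeholder; keep a real one secret.
    """
    digest = hashlib.sha256((salt + value).encode("utf-8")).hexdigest()
    return digest[:8]


def anonymize_contact(contact: dict) -> dict:
    """Replace name, email, and phone with fake values; keep other fields."""
    token = pseudonym(contact["email"])
    masked = dict(contact)  # copy so the input record is left untouched
    masked["name"] = f"Test User {token}"
    masked["email"] = f"user-{token}@example.invalid"
    # 555-0100 through 555-0199 are reserved for fictional use
    masked["phone"] = "555-01" + f"{int(token, 16) % 100:02d}"
    return masked


if __name__ == "__main__":
    record = {
        "name": "Jane Roe",
        "email": "jane.roe@corp.example",
        "phone": "+1 415 555 2671",
        "account_id": "A-1001",
    }
    print(anonymize_contact(record))
```

One caveat worth keeping in mind: a salted hash is pseudonymization rather than true anonymization, so whether it satisfies your privacy rules is a question for your compliance team.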

Once the environment was clean and secure, we invited the core team members — developers, QA testers, business analysts — to log in and start exploring. We set up different user profiles so people could test from various roles: sales reps, managers, support agents. That way, we could see how features behave depending on permissions and access levels.

Now, onto the actual function verification part. This is where we really put the system through its paces. We didn’t just click around randomly — we had a detailed test plan. It started with basic navigation: Can users log in? Can they access their dashboards? Do reports load properly? Simple stuff, but if these basics fail, nothing else matters.

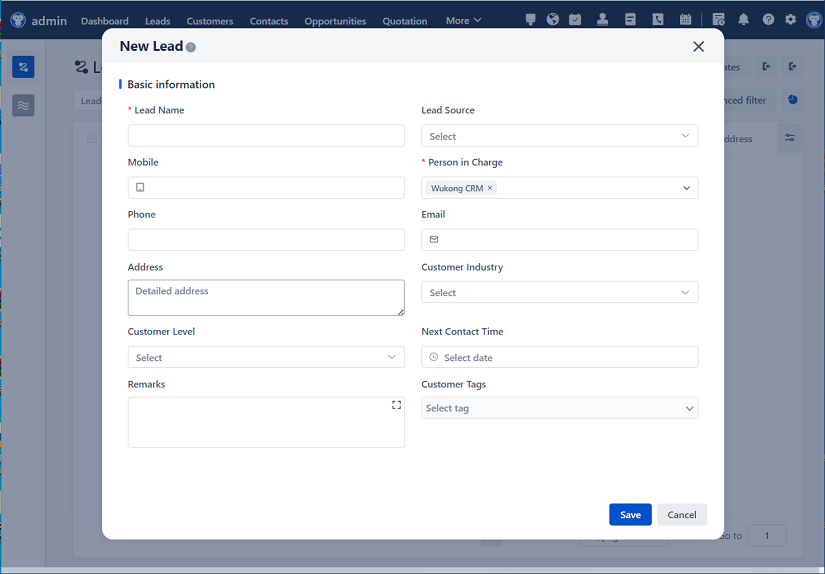

Then we moved on to core CRM functions. Things like creating a new lead, converting it to an opportunity, assigning it to a salesperson, updating stages, and finally closing the deal. We tested every button, every dropdown, every save action. Did the right notifications go out? Did the pipeline update correctly? Did related records get created automatically?
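
If it helps to make that lifecycle check concrete, here’s a rough sketch of how the expected stage transitions could be written down and checked. The stage names and the allowed transitions below are hypothetical, not our actual Salesforce configuration; the idea is just to compare the path a record actually took against the path it was allowed to take.

```python
# Hypothetical stage model: each stage maps to the stages it may move to next.
ALLOWED_TRANSITIONS = {
    "lead": {"opportunity"},
    "opportunity": {"negotiation", "closed_lost"},
    "negotiation": {"closed_won", "closed_lost"},
    "closed_won": set(),
    "closed_lost": set(),
}


def first_invalid_step(path):
    """Return the first (from_stage, to_stage) pair that is not allowed, or None."""
    for current, nxt in zip(path, path[1:]):
        if nxt not in ALLOWED_TRANSITIONS.get(current, set()):
            return (current, nxt)
    return None


if __name__ == "__main__":
    happy_path = ["lead", "opportunity", "negotiation", "closed_won"]
    skipped = ["lead", "closed_won"]
    print(first_invalid_step(happy_path))
    print(first_invalid_step(skipped))
```

A tester can record the stage history of a test deal and feed it in; anything other than `None` points at the exact step where the pipeline did something unexpected.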

We also tested automation. Our CRM has a bunch of workflow rules and approval processes. For example, when a deal hits a certain value, it needs manager approval before moving forward. So we created a high-value opportunity and watched to see if the system flagged it and routed it correctly. Spoiler: it did, but only after we fixed a small bug in the criteria. Good thing we caught that in testing!
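
Criteria bugs like that one tend to hide right at the boundary, so it’s worth testing just below, exactly at, and just above the threshold. Here’s a small sketch of that idea. The threshold of 50,000 and the “at or above” rule are assumptions for illustration; your real rule lives in the CRM’s approval process, and this only models the expected outcome so you can compare it against what the sandbox actually does.

```python
from decimal import Decimal

# Hypothetical threshold; substitute the value from your own approval rule.
APPROVAL_THRESHOLD = Decimal("50000")


def needs_manager_approval(amount: Decimal,
                           threshold: Decimal = APPROVAL_THRESHOLD) -> bool:
    """Expected behavior (assumed): deals at or above the threshold need approval."""
    return amount >= threshold


def boundary_cases(threshold: Decimal):
    """Amounts just below, exactly at, and just above the threshold."""
    one_cent = Decimal("0.01")
    return [
        (threshold - one_cent, False),
        (threshold, True),
        (threshold + one_cent, True),
    ]


if __name__ == "__main__":
    for amount, expected in boundary_cases(APPROVAL_THRESHOLD):
        actual = needs_manager_approval(amount)
        status = "ok" if actual is expected else "MISMATCH"
        print(f"{amount}: expected={expected} actual={actual} {status}")
```

Using `Decimal` instead of floats avoids rounding surprises when the amount sits a cent away from the limit.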

Another big piece was integration testing. Our CRM talks to several other systems — email platforms, ERP software, marketing tools. So we simulated data syncing between them. We updated a contact in the CRM and checked if the change reflected in the email tool within a few minutes. Same with orders going from ERP to CRM. Everything needs to stay in sync, or else we end up with conflicting information across departments.
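
The “did it show up within a few minutes” check is easy to automate with a simple polling loop. Below is a minimal sketch, assuming you already have some way to read the value from the downstream system; `read_value` is a hypothetical callable you would supply, and the five-minute timeout and ten-second interval are placeholder numbers, not our actual settings.

```python
import time
from typing import Callable, Optional


def wait_for_sync(read_value: Callable[[], object],
                  expected: object,
                  timeout_s: float = 300.0,
                  interval_s: float = 10.0,
                  clock: Callable[[], float] = time.monotonic,
                  sleep: Callable[[float], None] = time.sleep) -> Optional[float]:
    """Poll until read_value() equals expected.

    Returns the elapsed seconds on success, or None if the timeout passes.
    clock and sleep are injectable so the loop can be tested without waiting.
    """
    start = clock()
    while True:
        if read_value() == expected:
            return clock() - start
        if clock() - start >= timeout_s:
            return None
        sleep(interval_s)


if __name__ == "__main__":
    # Stand-in for a real lookup in the email tool: succeeds on the third read.
    reads = iter(["old@example.invalid", "old@example.invalid", "new@example.invalid"])
    elapsed = wait_for_sync(lambda: next(reads), "new@example.invalid",
                            timeout_s=5.0, interval_s=0.1)
    print("synced" if elapsed is not None else "timed out")
```

Returning the elapsed time rather than a plain yes/no is handy because you can log how long each sync took and spot integrations that are getting slower.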

And let’s not forget mobile access. A lot of our sales team uses the CRM app on their phones. So we pointed the mobile app at the sandbox, logged in, and tried common tasks: viewing accounts, logging calls, updating opportunities. Turns out, there was a layout issue on smaller screens, where a button was hidden behind a menu. Easy fix once we spotted it, but again, super important to catch before rolling out to production.

Performance was another concern. With a full copy of production data, the system should behave similarly in terms of speed and responsiveness. We timed how long reports took to generate, how fast pages loaded, and whether bulk actions (like updating 500 leads at once) caused timeouts. Most things were fine, but one complex report was taking over 30 seconds. We optimized the filters and added some indexes, and it dropped to under 10. Big win.
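
A stopwatch works, but timings are easier to compare between test rounds if you record them against an agreed budget. Here’s a small sketch of that approach. The check names and the budget values are made up for illustration; the only numbers taken from our run are the report that took over 30 seconds before tuning.

```python
import time
from typing import Callable, Dict, Tuple


def timed(action: Callable[[], object]) -> Tuple[object, float]:
    """Run action once and return (result, elapsed_seconds)."""
    start = time.perf_counter()
    result = action()
    return result, time.perf_counter() - start


def over_budget(timings: Dict[str, float],
                budgets: Dict[str, float]) -> Dict[str, float]:
    """Return only the checks whose measured time exceeds their budget.

    Checks without a budget are treated as unlimited.
    """
    return {
        name: elapsed
        for name, elapsed in timings.items()
        if elapsed > budgets.get(name, float("inf"))
    }


if __name__ == "__main__":
    # Hypothetical budgets in seconds, agreed with the business up front.
    budgets = {"pipeline_report": 10.0, "account_page": 3.0}
    # Measurements as you might collect them with timed(...) around real calls.
    timings = {"pipeline_report": 31.2, "account_page": 1.4}
    print(over_budget(timings, budgets))
```

In practice you would wrap each real action (running a report, loading a page, submitting a bulk update) in `timed(...)` and run it a few times, since a single measurement can be noisy.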

Security testing was non-negotiable. We double-checked that users couldn’t see data they weren’t supposed to. A sales rep shouldn’t be able to view financial details reserved for managers. We tried accessing restricted records — and thank goodness, the system blocked it. Role-based access control was working exactly as designed.
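
One way to keep access checks from being ad hoc is to write the expected permissions down as a matrix and compare it against what the system actually allows. The sketch below assumes a hypothetical `can_read(role, resource)` function that you would back with real login attempts or API calls in the sandbox; the roles and resources listed are examples, not our full permission model.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical expectations: (role, resource) -> should this role be able to read it?
EXPECTED_ACCESS: Dict[Tuple[str, str], bool] = {
    ("sales_rep", "opportunity"): True,
    ("sales_rep", "financial_details"): False,
    ("manager", "opportunity"): True,
    ("manager", "financial_details"): True,
    ("support_agent", "case"): True,
}


def find_access_violations(
    expected: Dict[Tuple[str, str], bool],
    can_read: Callable[[str, str], bool],
) -> List[Tuple[str, str]]:
    """Return every (role, resource) pair where actual access differs from expected."""
    return [
        pair
        for pair, allowed in expected.items()
        if can_read(*pair) != allowed
    ]


if __name__ == "__main__":
    # Stand-in for the real system: a misconfigured org that lets everyone read everything.
    def wide_open(role: str, resource: str) -> bool:
        return True

    print(find_access_violations(EXPECTED_ACCESS, wide_open))
```

The check catches mistakes in both directions: a role that can see something it shouldn’t, and a role that has lost access it legitimately needs.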

We also tested backup and restore procedures. What if someone accidentally deletes a critical record? Can we recover it quickly? We practiced restoring data from backups, and it worked smoothly. Gave us peace of mind knowing we’re not flying blind.

After several rounds of testing, we documented every issue we found — even the tiny ones. Like a typo in a field label or a missing help text. Nothing too serious, but professionalism matters, and clean UI builds user trust. We prioritized the bugs: critical ones (like broken workflows) got fixed immediately, minor ones (like cosmetic issues) were scheduled for later.
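
The triage rule itself is simple enough to sketch in a few lines. This is only an illustration of the split described above, not the tool we used; the `Issue` type is hypothetical, and the “major” level is an assumption added to show how anything between critical and minor would be ordered.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Lower number means higher priority. "major" is an assumed middle level.
SEVERITY_ORDER = {"critical": 0, "major": 1, "minor": 2}


@dataclass(frozen=True)
class Issue:
    title: str
    severity: str


def triage(issues: List[Issue]) -> Tuple[List[Issue], List[Issue]]:
    """Split issues into (fix_now, scheduled_later).

    Critical issues are fixed immediately; the rest are ordered by severity.
    """
    fix_now = [i for i in issues if i.severity == "critical"]
    later = sorted(
        (i for i in issues if i.severity != "critical"),
        key=lambda i: SEVERITY_ORDER[i.severity],
    )
    return fix_now, later


if __name__ == "__main__":
    found = [
        Issue("Typo in field label", "minor"),
        Issue("Approval workflow not routing", "critical"),
        Issue("Report takes over 30 seconds", "major"),
    ]
    fix_now, later = triage(found)
    print([i.title for i in fix_now])
    print([i.title for i in later])
```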

Once fixes were applied, we retested everything. Regression testing is crucial — you don’t want to solve one problem and create three new ones. We made sure that every fix worked and didn’t break anything else. It’s tedious, sure, but necessary.

Finally, we got sign-off from stakeholders. The sales ops lead, IT manager, and project sponsor all reviewed the results and confirmed the test environment was stable and reliable. That green light meant we could confidently use this setup for future development and testing.

Looking back, the whole process took about three weeks from start to finish. Was it a lot of work? Absolutely. But was it worth it? 100%. Now we have a safe space to experiment, train new hires, demo new features, and verify changes without risking our live operations.

One thing I’d emphasize is communication. We held daily stand-ups during the testing phase — quick 15-minute check-ins to share progress, roadblocks, and next steps. Kept everyone aligned and prevented misunderstandings. Also, we used a shared document to track test cases and results. Transparency is key when multiple people are involved.

Oh, and training! We didn’t just hand people access and say “good luck.” We ran a short onboarding session to explain how the test environment differs from production, what they can and can’t do, and where to report issues. People appreciated the clarity.

In the end, this test environment has become one of our most valuable tools. It’s not just for developers — business users rely on it too. Marketing tests campaign automations there. Support teams practice new case management flows. Even executives use it to preview upcoming changes before they go live.

So yeah, setting up a CRM test environment isn’t glamorous, but man, is it important. It’s like having a flight simulator before piloting a real plane. You get to make mistakes, learn from them, and improve — all without putting passengers at risk. And in our world, the “passengers” are our customers and our reputation.

If you’re thinking about skipping this step or cutting corners, please don’t. Take the time. Do it right. Your future self — and your entire organization — will thank you.

FAQs (Frequently Asked Questions):

Q: Why can’t we just test in the production environment with caution?

A: Because even with caution, mistakes happen. Testing in production risks corrupting real customer data, disrupting active workflows, or exposing sensitive info. A test environment lets you break things safely.

Q: How often should we refresh the test environment?

A: It depends on your release cycle, but generally every 1–3 months is a good rule of thumb. If your production data or configuration changes a lot, you’ll want to refresh more often to keep the test environment accurate.

Q: Can multiple teams use the same test environment at once?

A: Yes, but it can get messy. If teams are testing conflicting changes, they might interfere with each other. For larger organizations, having multiple sandboxes (e.g., dev, QA, UAT) is smarter.

Q: Is a full copy sandbox always necessary?

A: Not always. If you’re doing minor UI tweaks or testing simple logic, a Developer or Partial Copy sandbox might suffice. But for major releases or data-heavy testing, a Full Copy sandbox is best.

Q: Who should have access to the test environment?

A: Typically, developers, QA testers, business analysts, and key stakeholders. Access should be granted based on need, and permissions should mirror production roles to ensure realistic testing.

Q: What if we find a bug in the test environment?

A: Great! That’s exactly why we have it. Log the issue, assign it to the right person, fix it, and retest. Finding bugs in test means they won’t reach your customers.

Q: Can we use test data instead of copying production?

A: You can, but synthetic data might not reflect real-world complexity. Real data helps uncover edge cases. Just remember to anonymize it for privacy.

Q: How do we prevent test data from leaking into production?

A: Never directly migrate data from test to production. Use deployment tools (like change sets or CI/CD pipelines) to move only configuration changes, not data.

Q: Should we test performance in the test environment?

A: Yes, especially if you’re using a full copy sandbox. Performance issues often stem from data volume and complexity, so testing under realistic conditions is crucial.

Q: What’s the biggest mistake people make with CRM test environments?

A: Treating them as an afterthought. They skip proper setup, don’t refresh regularly, or allow uncontrolled changes. A well-maintained test environment is a force multiplier for quality and confidence.
