You know, when you're running a CRM system—especially one that your whole sales, marketing, and customer service teams rely on every single day—it’s kind of like managing the heartbeat of your business. I mean, think about it: if the CRM goes down, even for a few minutes, people can’t log calls, update leads, or respond to customer inquiries. That’s not just inconvenient—it can cost real money and damage relationships. So, over the years, I’ve learned that having solid monitoring and alert mechanisms in place isn’t just a nice-to-have; it’s absolutely essential.

Let me tell you, I used to think that as long as the CRM was up and running most of the time, we were fine. But then we had this one incident on a Tuesday morning, where the system started slowing down dramatically around 10 a.m. No one knew why. Sales reps were complaining that pages were taking forever to load, and customer service couldn’t pull up account histories. It turned out the database was under heavy load because of a misconfigured nightly sync job. No alert ever fired; we only found out when someone called the IT helpdesk. By then, over two hours had passed. That was a wake-up call for me.

From that point on, I made it my mission to build a smarter monitoring system. I started by asking myself: what exactly do we need to monitor? It’s not just about whether the CRM is up or down. That’s the most basic check—like checking if your car engine turns on. But what about performance? What about response times? What about background processes like data syncs or report generation? These are all things that can go wrong without the system technically being “down.”

So, I worked with our DevOps team to set up real-time monitoring across several key areas. We started tracking server health—CPU usage, memory, disk I/O—because if the servers are struggling, the CRM will feel sluggish. We also monitor application-level metrics, like how long it takes for a page to load or how fast the API responds. These are the things users actually notice. If a page takes more than two seconds to load, people start getting frustrated. And if it happens repeatedly, they lose trust in the system.

We also keep an eye on user activity patterns. For example, if we suddenly see a spike in login attempts or a drop in active sessions, that could signal a problem—maybe a failed deployment, a network issue, or even a security concern. Monitoring user behavior helps us catch issues that might not show up in traditional system metrics.
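To make the idea of a sudden spike or drop concrete, here is a minimal Python sketch. It compares the latest reading with the median of the readings just before it. The function name `sudden_change`, the 12-bucket window, and the ratio cut-offs are all assumptions chosen for illustration, not values from any particular tool.

```python
from statistics import median
from typing import Optional, Sequence


def sudden_change(
    series: Sequence[float],
    window: int = 12,
    spike_ratio: float = 3.0,
    drop_ratio: float = 0.5,
) -> Optional[str]:
    """Compare the latest value with the median of the `window` values before it.

    Returns "spike", "drop", or None when the latest value looks normal
    or there is not enough history to judge.
    """
    if len(series) < window + 1:
        return None
    reference = median(series[-window - 1:-1])
    latest = series[-1]
    if reference == 0:
        # Avoid dividing by zero: any activity after total silence is a jump.
        return "spike" if latest > 0 else None
    ratio = latest / reference
    if ratio >= spike_ratio:
        return "spike"
    if ratio <= drop_ratio:
        return "drop"
    return None


# Login attempts per 5-minute bucket (made-up numbers).
logins = [20, 22, 19, 21, 20, 23, 18, 20, 21, 22, 19, 20, 95]
# Active sessions per 5-minute bucket (made-up numbers).
sessions = [300] * 12 + [120]

print(sudden_change(logins))    # spike
print(sudden_change(sessions))  # drop
```

Using the median rather than the mean keeps one odd reading in the history from skewing the reference point.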

Now, here’s the thing: collecting all this data is great, but it’s useless if no one sees it when something goes wrong. That’s why alerts are so important. I remember early on, we had monitoring in place, but the alerts were either too noisy or too quiet. We’d get 50 emails about minor fluctuations that weren’t actually problems, so people started ignoring them. Or worse, we’d miss a critical alert because it got buried in a flood of low-priority messages.

So we had to get smarter about how we set up alerts. We started using thresholds: if CPU usage stays above 85% for more than five minutes, send an alert. Or if more than 10% of users are seeing page load times above 3 seconds, trigger a notification. We also tiered our alerts based on severity. A minor performance blip might just go to a Slack channel for the tech team to review later. But a full system outage? That gets a phone call, an SMS, and an email, all at once.
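Here is a rough Python sketch of those three ideas: a threshold that has to hold for a while, a check on the share of slow users, and severity-based routing. None of this is Datadog syntax. The names (`Sample`, `breached_for`, `slow_user_fraction`, `ROUTES`) are invented for the example, and it assumes that “more than five minutes” can be treated as at least 300 seconds of consecutive samples above the limit.

```python
from dataclasses import dataclass
from typing import Dict, List, Sequence


@dataclass
class Sample:
    timestamp: float  # seconds since the epoch
    value: float      # e.g. CPU utilisation in percent


def breached_for(samples: Sequence[Sample], threshold: float, min_duration_s: float) -> bool:
    """True if the newest run of samples has stayed above `threshold`
    for at least `min_duration_s` seconds. Samples must be in time order."""
    if not samples or samples[-1].value <= threshold:
        return False
    streak_start = samples[-1].timestamp
    for sample in reversed(samples):
        if sample.value <= threshold:
            break
        streak_start = sample.timestamp
    return samples[-1].timestamp - streak_start >= min_duration_s


def slow_user_fraction(load_time_by_user: Dict[str, float], limit_s: float) -> float:
    """Fraction of users whose average page load time exceeds `limit_s`."""
    if not load_time_by_user:
        return 0.0
    slow = sum(1 for t in load_time_by_user.values() if t > limit_s)
    return slow / len(load_time_by_user)


# Severity tiers mapped to notification channels (channel names are placeholders).
ROUTES: Dict[str, List[str]] = {
    "minor": ["slack"],
    "critical": ["phone", "sms", "email"],
}


def channels_for(severity: str) -> List[str]:
    return ROUTES[severity]


cpu = [Sample(timestamp=60.0 * i, value=90.0) for i in range(6)]  # 0..300 s, all at 90%
print(breached_for(cpu, threshold=85.0, min_duration_s=300.0))  # True
print(channels_for("critical"))
```

The duration requirement is what filters out short blips: a single dip below the threshold resets the streak.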

We use a tool called Datadog, but honestly, there are plenty of good options out there—New Relic, Splunk, Prometheus, even built-in tools from cloud providers like AWS CloudWatch. The key isn’t the tool itself; it’s how you configure it. You’ve got to understand your system’s normal behavior so you can spot anomalies. For example, we noticed that every Monday morning at 9 a.m., there’s a predictable spike in traffic as people start their week. If we set a static threshold, we’d get false alarms every Monday. So we adjusted our alerts to account for that pattern.
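One simple way to account for a weekly pattern like that Monday spike is to keep a separate baseline for each weekday and hour, then compare new readings against the matching slot only. The Python sketch below shows the idea. It is not how any specific vendor implements anomaly detection, and `build_baseline`, `is_anomalous`, the factor `k`, and the traffic numbers are all assumptions made up for the example.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean, pstdev
from typing import Dict, Iterable, Tuple

Slot = Tuple[int, int]  # (weekday, hour); Monday is weekday 0


def build_baseline(history: Iterable[Tuple[datetime, float]]) -> Dict[Slot, Tuple[float, float]]:
    """Group past readings by weekday and hour; store (mean, std dev) per slot."""
    buckets = defaultdict(list)
    for ts, value in history:
        buckets[(ts.weekday(), ts.hour)].append(value)
    return {slot: (mean(vals), pstdev(vals)) for slot, vals in buckets.items()}


def is_anomalous(baseline, ts: datetime, value: float, k: float = 3.0, min_band: float = 1.0) -> bool:
    """True if `value` is well above what is normal for this weekday and hour."""
    slot = (ts.weekday(), ts.hour)
    if slot not in baseline:
        return False  # no history for this slot; stay quiet rather than guess
    mu, sigma = baseline[slot]
    return value > mu + max(k * sigma, min_band)


# Requests per minute at 9 a.m. over four weeks (made-up numbers).
history = [
    (datetime(2024, 1, 1, 9), 1000), (datetime(2024, 1, 8, 9), 1020),   # Mondays
    (datetime(2024, 1, 15, 9), 980), (datetime(2024, 1, 22, 9), 1010),
    (datetime(2024, 1, 2, 9), 200), (datetime(2024, 1, 9, 9), 210),     # Tuesdays
    (datetime(2024, 1, 16, 9), 190), (datetime(2024, 1, 23, 9), 205),
]
baseline = build_baseline(history)

print(is_anomalous(baseline, datetime(2024, 1, 29, 9), 1015))  # Monday: False
print(is_anomalous(baseline, datetime(2024, 1, 30, 9), 1015))  # Tuesday: True
```

The same traffic level is normal on a Monday morning and alarming on a Tuesday, which is exactly what a single static threshold cannot express.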

Another thing I learned the hard way: alerts need context. If I get a message saying “CRM API latency is high,” that’s not super helpful. But if the alert says, “CRM API latency is 1.8 seconds—up from normal 0.4 seconds—originating from the customer search endpoint,” now I’ve got something actionable. We started including details like affected components, recent deployments, and related error logs in our alerts. It saves so much time during troubleshooting.
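As an illustration of what a context-rich alert could look like in code, here is a small Python sketch that assembles the message from structured fields. The `AlertContext` fields and the `render_alert` function are hypothetical, and the deployment name and log line in the example are invented purely to show the shape of the output.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AlertContext:
    metric: str
    current: float
    baseline: float
    unit: str
    component: str
    recent_deployments: List[str] = field(default_factory=list)
    log_excerpts: List[str] = field(default_factory=list)


def render_alert(ctx: AlertContext) -> str:
    """Build a human-readable alert that says what, how bad, and where."""
    lines = [
        f"{ctx.metric} is {ctx.current:g} {ctx.unit} "
        f"(normal: {ctx.baseline:g} {ctx.unit}), "
        f"originating from {ctx.component}"
    ]
    if ctx.recent_deployments:
        lines.append("Recent deployments: " + ", ".join(ctx.recent_deployments))
    if ctx.log_excerpts:
        lines.append("Related errors:")
        lines.extend(f"  {entry}" for entry in ctx.log_excerpts)
    return "\n".join(lines)


message = render_alert(
    AlertContext(
        metric="CRM API latency",
        current=1.8,
        baseline=0.4,
        unit="seconds",
        component="the customer search endpoint",
        recent_deployments=["search-service (example entry)"],
        log_excerpts=["TimeoutError: contact query timed out (example entry)"],
    )
)
print(message)
```

Keeping the context as structured fields, rather than a free-text string, means the same data can feed a chat message, a ticket, or a dashboard.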

We also set up automated health checks. Every five minutes, a script runs through a series of simulated user actions—logging in, searching for a contact, creating a task, saving a note. If any of those steps fail, it triggers an alert. It’s like having a robot user constantly testing the system for us. And because it mimics real user behavior, it catches issues that pure infrastructure monitoring might miss.
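A scripted check like that can be sketched in a few lines of Python. In this example the steps are stubs standing in for real calls against the CRM, and one of them is rigged to fail so the alert path is visible. The function names and the error text are assumptions for illustration, and scheduling the script every five minutes is left to whatever scheduler you already use.

```python
from typing import Callable, List, Optional, Tuple

Step = Tuple[str, Callable[[], None]]


def run_health_check(steps: List[Step]) -> Optional[str]:
    """Run each step in order and stop at the first failure.

    Returns None when every step passes, otherwise "<step name>: <error>".
    """
    for name, action in steps:
        try:
            action()
        except Exception as exc:  # any failure in a step counts as a failed check
            return f"{name}: {exc}"
    return None


# Stub steps standing in for real calls against the CRM.
def log_in() -> None:
    pass


def search_contact() -> None:
    pass


def create_task() -> None:
    raise RuntimeError("task API returned HTTP 500")  # simulated failure


def save_note() -> None:
    pass


steps: List[Step] = [
    ("log in", log_in),
    ("search for a contact", search_contact),
    ("create a task", create_task),
    ("save a note", save_note),
]

failure = run_health_check(steps)
if failure is not None:
    print(f"ALERT: synthetic check failed at {failure}")
```

Stopping at the first failing step keeps the alert focused on where the workflow broke, since later steps often depend on earlier ones.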

One of the coolest things we added was synthetic monitoring from different geographic locations. Our CRM is used globally, so we set up monitoring nodes in North America, Europe, and Asia. This way, we can tell if a performance issue is affecting everyone or just users in a specific region. Once, we noticed that users in Germany were experiencing slow load times, but everyone else was fine. Turns out, there was a routing issue with our CDN in that region. Without geographic monitoring, we might have spent hours looking in the wrong place.
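The “is it everyone or just one region?” question can be expressed as a small classification step over per-region probe results. The Python sketch below is one way to do it, assuming each region reports a handful of recent latency samples. The region names, the 2-second limit, and the `classify_scope` function are illustrative assumptions.

```python
from statistics import median
from typing import Dict, List, Tuple


def classify_scope(latency_by_region: Dict[str, List[float]], limit_s: float) -> Tuple[str, List[str]]:
    """Label a slowdown as "healthy", "regional", or "global".

    A region counts as slow when the median of its samples exceeds `limit_s`.
    """
    slow = sorted(
        region
        for region, samples in latency_by_region.items()
        if samples and median(samples) > limit_s
    )
    if not slow:
        return ("healthy", [])
    if len(slow) == len(latency_by_region):
        return ("global", slow)
    return ("regional", slow)


# Page load times in seconds from three probe locations (made-up numbers).
probes = {
    "north-america": [0.4, 0.5, 0.45],
    "europe": [2.8, 3.1, 2.9],
    "asia": [0.6, 0.5, 0.7],
}
print(classify_scope(probes, limit_s=2.0))  # ('regional', ['europe'])
```

A “regional” result points the investigation toward things like CDN or network routing for that region before anyone starts digging into the application itself.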

Now, monitoring isn’t just about catching problems—it’s also about preventing them. We use historical data to spot trends. For example, if database query times are slowly increasing over weeks, that might mean we need to optimize indexes or scale up the database. Or if disk usage is creeping up, we can plan a cleanup before we run out of space. Being proactive like this has saved us from several potential outages.
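For the disk usage case, a straight-line fit over recent daily readings gives a rough estimate of how long you have left. The Python sketch below uses an ordinary least-squares slope. It assumes one reading per day and roughly linear growth, which will not hold for every workload, and the function name `days_until_full` and the numbers are made up for the example.

```python
from typing import Optional, Sequence


def days_until_full(daily_used_gb: Sequence[float], capacity_gb: float) -> Optional[float]:
    """Estimate days until the disk is full from one usage reading per day.

    Fits a least-squares line through the readings. Returns None when there
    is too little data or usage is flat or shrinking.
    """
    n = len(daily_used_gb)
    if n < 2:
        return None
    x_mean = (n - 1) / 2
    y_mean = sum(daily_used_gb) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(daily_used_gb))
    slope = sxy / sxx  # growth in GB per day
    if slope <= 0:
        return None
    fitted_today = y_mean + slope * ((n - 1) - x_mean)
    return max(0.0, (capacity_gb - fitted_today) / slope)


usage = [100, 102, 104, 106, 108]  # GB used on five consecutive days
print(days_until_full(usage, capacity_gb=128))  # 10.0
```

An estimate like this is most useful as a trigger for planning, for example alerting when the forecast drops below a few weeks, rather than as a precise prediction.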

We also tie monitoring into our incident response process. When an alert fires, it automatically creates a ticket in our service management tool and notifies the on-call engineer. We have runbooks—step-by-step guides—for common issues, so the person responding doesn’t have to figure everything out from scratch. And after every incident, we do a post-mortem to understand what happened and how we can improve. No blame, just learning.
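The alert-to-ticket-to-engineer flow can be sketched as a single function that takes the ticketing and notification steps as plug-in callables. This Python example uses in-memory stand-ins so it runs on its own. The `Incident` class, `open_incident`, the ticket ID format, and the runbook path are all hypothetical and do not correspond to any specific service management tool.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Incident:
    alert_name: str
    ticket_id: str
    on_call: str
    runbook: Optional[str]


def open_incident(
    alert_name: str,
    on_call: str,
    create_ticket: Callable[[str], str],
    notify: Callable[[str, str], None],
    runbooks: Dict[str, str],
) -> Incident:
    """Create a ticket, look up a runbook, and notify the on-call engineer."""
    ticket_id = create_ticket(alert_name)
    runbook = runbooks.get(alert_name)
    hint = f"runbook: {runbook}" if runbook else "no runbook yet"
    notify(on_call, f"[{ticket_id}] {alert_name} ({hint})")
    return Incident(alert_name, ticket_id, on_call, runbook)


# In-memory stand-ins so the sketch runs without any external service.
sent: List[Tuple[str, str]] = []
incident = open_incident(
    alert_name="database-high-load",
    on_call="engineer-on-call",
    create_ticket=lambda name: "TICKET-1",
    notify=lambda who, text: sent.append((who, text)),
    runbooks={"database-high-load": "runbooks/database-high-load.md"},
)
print(sent[0][1])
```

Passing the ticketing and notification functions in as arguments keeps the flow testable and makes it easy to swap tools later.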

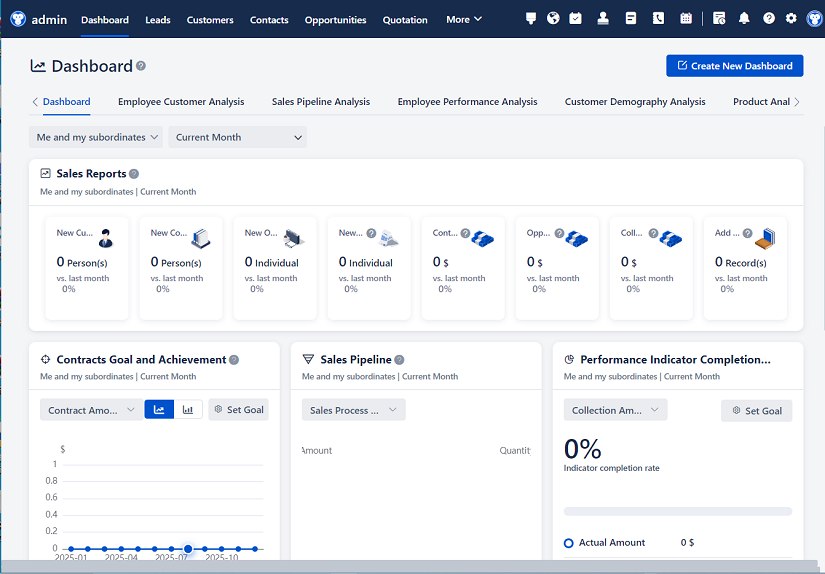

Another thing that’s made a big difference is visibility. We put up a real-time dashboard in the office (and in our internal portal) that shows the CRM’s current status—green, yellow, or red—along with key metrics. When the dashboard turns yellow, people know something’s up, even if they’re not directly affected yet. It builds awareness and trust. And when we’re fixing an issue, we update the dashboard with progress, so everyone knows we’re on it.
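A green, yellow, or red headline status is usually just the worst status among the components behind it. Here is a minimal Python sketch of that roll-up. The component names and the choice to show green when nothing is reporting are assumptions; a real dashboard might prefer an explicit “unknown” state.

```python
from typing import Dict

# Higher rank means worse.
RANK = {"green": 0, "yellow": 1, "red": 2}


def overall_status(components: Dict[str, str]) -> str:
    """Return the worst status among all components."""
    if not components:
        return "green"  # assumption: nothing reporting is shown as green
    return max(components.values(), key=lambda status: RANK[status])


components = {
    "web": "green",
    "api": "yellow",
    "database": "green",
    "nightly-sync": "green",
}
print(overall_status(components))  # yellow
```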

I’ll be honest—setting all this up took time and effort. There were days when I wondered if it was worth it. But then something happens—like last month, when our monitoring caught a memory leak in a new feature before it brought down the system—and I remember why we did it. That one alert saved us from a major outage during a big sales push.

We also involve the business teams in this process. I meet with sales and customer service leaders every quarter to review uptime reports and discuss any recurring issues. They appreciate knowing that we’re watching the system closely, and they often give us feedback on what performance metrics matter most to them. It’s helped bridge the gap between IT and the rest of the organization.

One last thing—monitoring isn’t a one-and-done project. Systems evolve, user needs change, and new threats emerge. So we review our monitoring setup regularly. We add new checks when we roll out new features. We adjust thresholds as usage grows. And we always keep an eye on new tools and techniques that could make us better.

At the end of the day, a CRM is only as good as its reliability. And reliability doesn’t happen by accident. It takes planning, the right tools, and a commitment to continuous improvement. But when you get it right, the payoff is huge: happy users, smooth operations, and confidence that your business-critical system is in good hands.

Q&A:

Q: Why can’t we just rely on users to report problems?

A: Honestly, users will report issues—but usually only after they’ve been frustrated for a while. By then, the problem may have affected dozens of people. Proactive monitoring lets us catch and fix issues before most users even notice.

Q: Isn’t monitoring expensive and complicated?

A: It can be, if you overcomplicate it. But you don’t need to monitor everything at once. Start with the basics—system uptime, response times, and critical workflows—and build from there. Many tools offer free tiers or affordable plans for small to mid-sized teams.

Q: What’s the difference between monitoring and alerting?

A: Great question. Monitoring is about collecting data—like watching a patient’s vital signs. Alerting is what happens when those vitals go out of range—you get a notification so you can act. You need both.

Q: How do we avoid alert fatigue?

A: Set smart thresholds, prioritize alerts by severity, and suppress noise. If your team is getting too many false alarms, they’ll start ignoring them. Focus on alerts that require real action.
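One common way to suppress noise is a cooldown: once an alert has been sent for a given problem, repeats of the same alert are held back for a while. The Python sketch below shows the idea. The `AlertSuppressor` class, the 15-minute cooldown, and the alert keys are assumptions for illustration.

```python
from typing import Dict


class AlertSuppressor:
    """Let an alert through only if the same key has not fired recently."""

    def __init__(self, cooldown_s: float) -> None:
        self.cooldown_s = cooldown_s
        self._last_sent: Dict[str, float] = {}

    def should_send(self, key: str, now: float) -> bool:
        last = self._last_sent.get(key)
        if last is not None and now - last < self.cooldown_s:
            return False  # still inside the cooldown window: suppress
        self._last_sent[key] = now
        return True


suppressor = AlertSuppressor(cooldown_s=900)  # 15 minutes
print(suppressor.should_send("api-latency-high", now=0))     # True
print(suppressor.should_send("api-latency-high", now=300))   # False
print(suppressor.should_send("disk-usage-high", now=300))    # True
print(suppressor.should_send("api-latency-high", now=1000))  # True
```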

Q: Can monitoring help with security?

A: Absolutely. Unusual login patterns, failed authentication spikes, or unexpected data exports can all be detected through monitoring. It’s not a replacement for dedicated security tools, but it’s a valuable layer.

Q: Should we monitor third-party integrations too?

A: Yes! If your CRM pulls data from a marketing tool or syncs with your ERP, those connections can break. Monitor the health of those integrations just like you do the CRM itself.

Q: What’s one thing you wish you’d known earlier about CRM monitoring?

A: That user experience metrics—like page load time and task completion rate—are just as important as server metrics. The system might be “up,” but if it’s slow or broken for users, it’s not really working.
